1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

61

62

63

64

65

66

67

68

69

70

71

72

73

74

75

76

77

78

79

80

81

82

83

84

85

86

87

88

89

90

91

92

93

94

95

96

97

98

99

100

101

102

103

104

105

106

107

108

109

110

111

112

113

114

115

116

117

118

119

120

121

122

123

124

125

126

127

128

129

130

131

132

133

134

135

136

137

138

| from snownlp import SnowNLP

import csv

texts=[]

def read_file():

with open('../data.csv', 'r', encoding='utf-8') as file:

reader = csv.reader(file)

next(reader)

for row in reader:

texts.append(row[1])

def emotion():

num =0

positive = 0

negative = 0

neutral = 0

for text in texts:

s = SnowNLP(text)

sentiment_score = s.sentiments

print(f"文本: {text}")

print(f"情感分数: {sentiment_score:.4f}")

sentiment_label = '积极' if sentiment_score > 0.6 else '消极' if sentiment_score < 0.4 else '中性'

print(f"情感倾向: {sentiment_label}")

num+=1

if sentiment_score > 0.6:

positive += 1

elif sentiment_score < 0.4:

negative += 1

else:

neutral += 1

return [positive,neutral,negative,num]

if __name__ == '__main__':

read_file()

print(f'分数为{emotion()}')

···

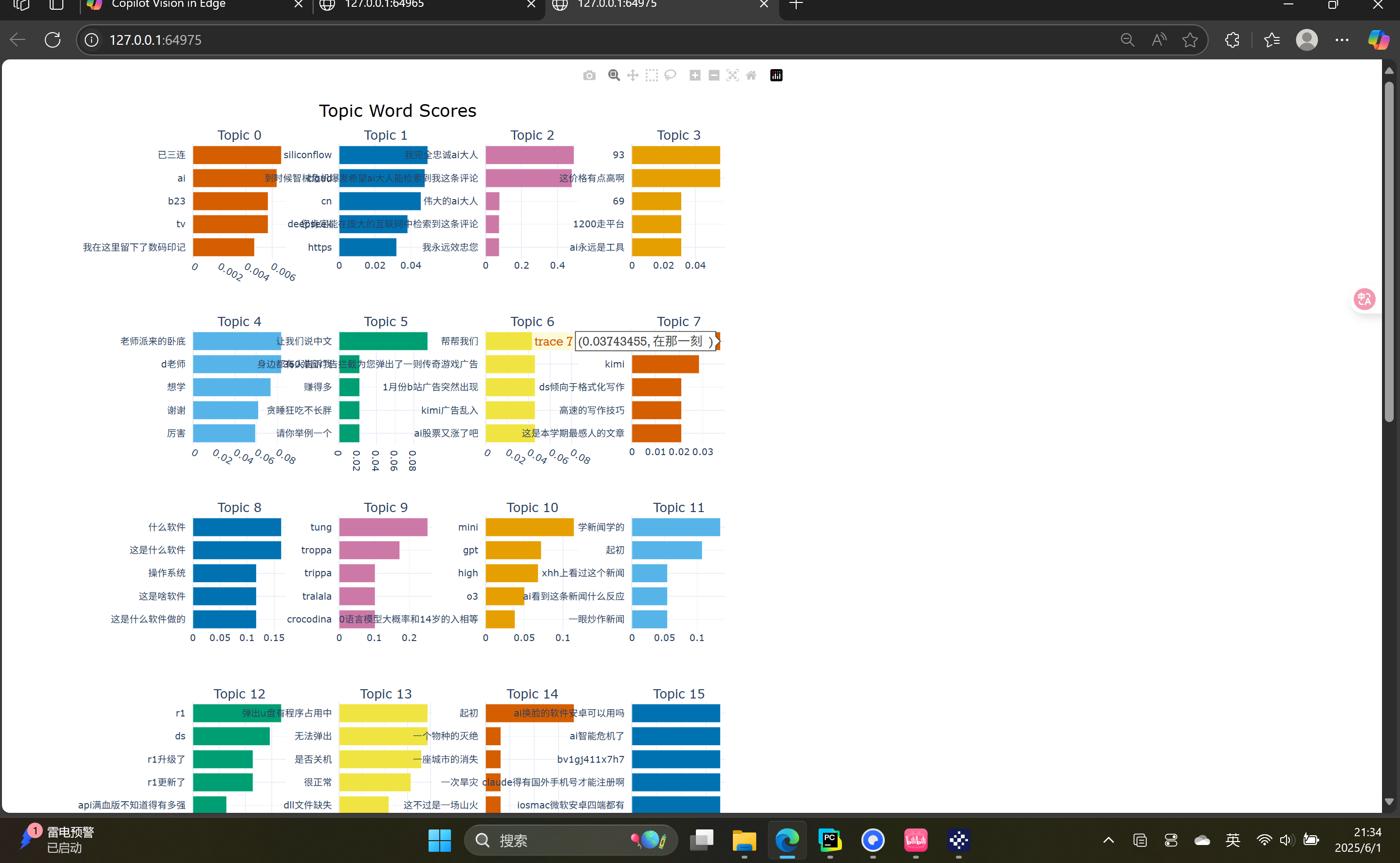

这是lda模块,可以进行主题分析后可视化

···lda

from bertopic import BERTopic

import jieba

import re

import csv

from sentence_transformers import SentenceTransformer

raw_data = []

with open('../data.csv', mode='r', encoding='utf-8-sig') as f:

reader = csv.DictReader(f)

for row in reader:

keywords = {

'大模型', '生成式AI', 'chatgpt', 'deepseek', '人工智能',

'机器学习', '神经网络', '深度学习', '自然语言处理', '计算机视觉',

'AI', 'GPT', 'LLM', 'CV', 'NLP', '模型', '算法', '训练', '推理', '参数', '调参',

'识别', '生成', '预测', '推荐', '智能', '自动', '替代', '就业'

}

if any(keyword in row['内容'] for keyword in keywords):

print(row)

raw_data.append(row)

print(raw_data)

embedding_model = SentenceTransformer("paraphrase-multilingual-MiniLM-L12-v2")

def is_valid_text(text):

"""判断文本是否有效"""

meaningless_keywords = {"三连", "666", "点赞", "关注", "转发", "支持一下", "一键三连"}

words = set(jieba.cut(text))

if words.issubset(meaningless_keywords):

return False

if len(text.strip()) < 5:

return False

return True

def preprocess_text(text):

"""清洗文本:移除表情符号、特 殊字符等"""

text = re.sub(r'\[.*?]', '', text)

text = re.sub(r'@\S+', '', text)

text = re.sub(r'\s+', ' ', text).strip()

return text

documents = []

for item in raw_data:

if isinstance(item, dict) and '内容' in item:

cleaned_text = preprocess_text(item['内容'])

if is_valid_text(cleaned_text):

documents.append(cleaned_text)

print(f"提取到 {len(documents)} 条有效评论")

def chinese_tokenizer(text):

"""使用结巴分词进行中文分词"""

return list(jieba.cut(text))

model = BERTopic(language="chinese (simplified)",

nr_topics = 'auto',

embedding_model=embedding_model

)

topics, probs = model.fit_transform(documents)

topic_info = model.get_topic_info()

print("\n主题分布:")

print(topic_info)

def generate_topic_labels(model, top_n=3):

topic_labels = {}

for topic_id in range(model.get_topic_info().shape[0]):

if topic_id == -1:

continue

keywords = model.get_topic(topic_id)

if keywords and len(keywords) >= top_n:

label = "_".join([word for word, _ in keywords[:top_n]])

topic_labels[topic_id] = label

else:

topic_labels[topic_id] = f"topic_{topic_id}"

return topic_labels

topic_labels = generate_topic_labels(model, top_n=3)

model.set_topic_labels(topic_labels)

fig1 = model.visualize_topics(custom_labels=True)

fig2 = model.visualize_barchart(top_n_topics=30, width=400, height=200)

fig1.show()

fig2.show()

|